Last week at Mozfest 2019 we ran a session with the title “Let’s optimise for Interoperability, not decentralisation”. About 25 people came together to discuss what is required to foster an interoperable system from the perspective of technology, business models/regulation and communication.

There are many different areas where interoperability can be applied, too many to discuss in such a short period of time. In our discussions we focused on data and social portability in social media, chat and knowledge management applications.

Setting the context

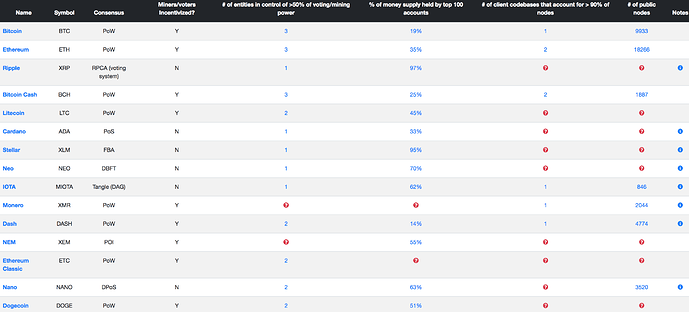

In the past few years “decentralisation” was the buzzword in the open source community. Blockchains, new databases and other p2p approaches promised to help rebuild trust between people and institutions (counter-intuitively by not needing to trust anyone anymore), ensure privacy and break the power of centralised monopolies.

Breaking the power of centralised monopolies and rebuilding trust in each other and in vital institutions is what I’d like to see too. However, I don’t see a focus on the above-mentioned decentralised technologies as the right lever for that.

After all, the web is already decentralised, yet it has still become highly centralised, and trust has diminished. Why should that be different with those ecosystems, especially given that many of them are already more centralised than regular capitalistic ecosystems?

So it is more of a question: How can we make the web less centralised and how can we get better institutions that are partially centralised, but that people can trust?

I believe the gateway to get there is a joint focus of our community and the open-source community at large to provide data and social portability: the ability to migrate your data and your social graph to different services.

It would allow users to leave services and migrate to others more easily and still communicate with their friends on the old services. Imagine creating a new Facebook app with all your existing data in it and still being able to talk to your friends using the old app.

This would create market pressure towards more ethical, trustworthy and better services, not through a top-down regulatory force (blockchains/smart contracts) but through emergent free-market dynamics. If decentralised components deliver real-life utility they could also emerge naturally, but they are no longer the baseline.

Data and social portability would also offer a value proposition that centralised monopolies can’t compete with: freedom and better tools for the individual.

It would naturally limit the number of users and the power any individual service can amass. This is because people could migrate to the solutions that provide the most value to them, which differs for every person. Thus the maximum target group of a service has a very natural limit. If Facebook had such open APIs, it would never be able to retain 2 billion users. It does so now because it has been incredibly good at creating network effects and lock-ins, not because it is the best service for each of its 2 billion users.

The Discussion Rounds

People split into 3 groups, each looking at the question of what is required to foster an interoperable system from the perspective of technology, business models/regulation and communication. After 45 minutes each group shared the gist of their conversation with the larger group.

Initially, people came into the session with their own sets of questions:

- What are we talking about when we say interoperability vs decentralisation vs p2p web?

- What are the upsides and downsides of each model?

- What are the synergies and tensions between interoperability and decentralisation?

- What are the building blocks of an open web?

- Geopolitical and geoeconomic effects of interoperability?

- Who is in control and power?

- Interoperability between tools and how that enables innovation

- What are communities that are working on interoperability?

- How does standardisation help to foster interoperability?

- Digital data ecosystems from a lawyer’s perspective

- Minimum viable interoperability: how to lower the barriers so that the smallest possible set of people can start building interoperable things?

- Decentralised technologies? How to solve data privacy issues?

- Decentralisation to integrate many stakeholders to solve problems?

TECHNOLOGY:

- What technological options do we have to create more data and social portability?

- Where are the limitations and challenges?

Suggestions:

- The goal must be:

  - A user has the right to migrate 100% of their user-generated data

  - A user has the right to migrate their social graph, and to still communicate with their social network via old APIs

- As Irina Bolychevsky (@shevski) has written in her recent blog post, what we need is a set of APIs that would at least allow us to move data around as we see the need. This could be a step-by-step process of gradually opening the most vital APIs to your personal data.

- Over time, it is a must to develop open standards for things like messaging threads and social posts, which organisations need to make queryable, not just exportable in a file as Facebook does now.

- Discoverable extensibility. Every piece of software should be able to add types of data that make sense in its own context. However, not every piece of software would be able to recognise every piece of data that comes to it. This could be solved by the receiving software detecting hidden data and allowing users to install additional software/plugins to display the data, or not.

- Develop testable API design standards for social data, much like the Acid tests for browser APIs

- Wide adoption of user-centric design practices, data storage and computing would allow more flexible transformation of data into different formats and lessen the need for standardisation, a process that can often end up being very political.

- A challenge is the difficulty of creating use-case-specific requirements, such as ‘regulating’ Facebook differently from Twitter because of the different data they hold. There are too many companies for this level of customisation, but you could start with the most powerful ones.
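To make the “discoverable extensibility” suggestion above a bit more concrete, here is a minimal Python sketch. It is only an illustration under assumptions that were not part of the session: the `Item` record with a `type` field, the `Receiver` class and the `install_plugin` method are all hypothetical names, not an existing API. The idea it shows is that the receiving software keeps data it cannot display yet instead of dropping it, tells the user which types are hidden, and can show them once a matching plugin is installed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Item:
    """Hypothetical portable record: each item names its own data type."""
    type: str
    payload: dict


@dataclass
class Receiver:
    """Hypothetical receiving app with a registry of display plugins."""
    # Maps a type name to a function that turns a payload into display text.
    renderers: Dict[str, Callable[[dict], str]] = field(default_factory=dict)

    def install_plugin(self, type_name: str, renderer: Callable[[dict], str]) -> None:
        """Teach the app how to display one more type of data."""
        self.renderers[type_name] = renderer

    def receive(self, items: List[Item]) -> Tuple[List[str], List[Item]]:
        """Return (displayed texts, hidden items the app cannot show yet)."""
        shown, hidden = [], []
        for item in items:
            renderer = self.renderers.get(item.type)
            if renderer is None:
                hidden.append(item)  # unknown type: keep it, do not drop it
            else:
                shown.append(renderer(item.payload))
        return shown, hidden

    @staticmethod
    def hidden_types(hidden: List[Item]) -> List[str]:
        """Type names the user could be offered a plugin for."""
        return sorted({item.type for item in hidden})


# Usage: the app knows posts but not polls until a plugin is installed.
receiver = Receiver()
receiver.install_plugin("post", lambda p: p["text"])
items = [
    Item("post", {"text": "hello"}),
    Item("poll", {"question": "Tea?", "options": ["yes", "no"]}),
]
shown, hidden = receiver.receive(items)
print(shown, Receiver.hidden_types(hidden))

receiver.install_plugin("poll", lambda p: p["question"])
shown, hidden = receiver.receive(items)
print(shown, Receiver.hidden_types(hidden))
```

The design choice worth noting is that unknown data survives the round trip: whether the user installs a plugin or not, the hidden items stay attached to their account and can still be migrated onwards.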

FUNDING / BUSINESS MODELS / REGULATION

- What kind of business & economic models are there that would support the principles of data and social portability?

- What policy decisions could help to create more interoperability?

Suggestions:

- When creating new organisations, use non-monopolising economic models like Steward Ownership, where companies can never be sold and investors are rewarded with a capped profit share. This shifts from a growth-centric business logic to a service-centric one. Good service would result in profits. This could remove economic incentives to lock people and their data in and instead align them with interoperability.

- Provide state-side funding for companies that use non-monopolising economic models like Steward Ownership or Zebra (not Unicorn) organisations.

- Provide state-side funding or tax breaks for organisations that adhere to a level of interoperability and make data available in accepted standards, and yearly fines for those that do not. The difficulty is to find a level of tax breaks or fines that can compete with the profits companies make by not creating interoperable standards. Historically, big organisations have been happy to pay a fine instead of losing growth.

- We often think that innovation comes from the market only, missing the vital part state-side funding plays in both groundbreaking innovation and early stage funding for startups (e.g. Google & Facebook have been funded by the US government)

- To avoid punishing smaller organisations with a regulatory nightmare, force only companies above a certain size to provide a certain level of open APIs for personal data (e.g. above 100k users or 100k of revenue/month).

- New open-source licenses that allow charging licensing fees for for-profit use. This would allow (open source) software providers to still benefit from their software being used by competitors, and thus offer flexibility to work more in the open.

  - Source available licenses

  - Peer Production Licenses

COMMUNICATION TO USERS

- How can we communicate the value of portability?

- What real life effects does it have on non-technical people?

Suggestions:

- An important piece of the communication journey was to first define the key terms we would like to communicate, and then how to communicate them:

  - Data ownership

  - Data portability

  - Data rights

  - Data commons

- We found that a powerful way of communicating the value of interoperability and of data and social portability is through real-life examples:

  - Email: You can use any software or email provider and communicate with each other. Migration is relatively easy from one provider to the other.

  - Money: You can buy at any shop you want and thus put economic pressure on innovation & better services. Ask people: Why don’t we have the same freedom with our most valuable data?

- The benefits people will have are:

  - Freedom to leave services without leaving their friends

  - Freedom to use the tools they want without wondering what their friends use. Example: Telegram and WhatsApp - I should not care what you use.

  - More service competition possible - More choices, better quality!

- However, there are also challenges around responsibility. Many people don’t want to own their data entirely because they don’t want to be responsible for losing it. So they desire a right to control it more than a right to own it. Data commons or stewards could solve this because they could be institutions or hosting providers that take care of people’s data.

- Communicating data ownership or control as a human right seems to be a vital step to emotionally connect people to the value of their data.

Thanks to:

- Gemma Copeland from CommonKnowledge Coop for providing me with her notes on the ‘Communication’ Group.

- Everyone who participated and added their thoughts to this process